There’s a difference between having a lock and having a door that can resist forced entry.

Most security teams pride themselves on having plenty of locks. But the pivotal question, one that most shy away from and that rarely gets asked directly, is this: would those locks hold against a determined adversary who’s actually trying to get in?

For many, the easy explanation for this gap between the presence of controls and a truly secure posture is technical incompetence.

I find this a convenient framing, one that lets us assume that some combination of better tools, more training, or different processes is the only fix required. The appeal is understandable. It allows organizations to avoid a harsher possibility: that the gap persists not because of a lack of capability, but because of how organizations choose to operate and how we sustain systems that reward the appearance of security over its substance.

As uncomfortable as that diagnosis may be, it leads us somewhere more useful. It forces us to acknowledge that we’re dealing with a problem at the intersection of three disciplines: decision science, which studies how we make choices under uncertainty; organizational behavior, which examines how incentives, culture, and power structures shape what people actually do; and cyber risk management, which is the technical domain where these dynamics play out.

If we want to understand why security programs so often fail to deliver actual security, we need to see this as a human systems problem that happens to express itself technically.


The Framework-First Inversion

In theory, you would expect a security program to be built like this: understand the threat landscape → design controls that create asymmetric cost for those specific threats → organize and measure those controls → demonstrate compliance as a byproduct.

In practice, it tends to go the other way. Organizations select a compliance framework, implement controls that satisfy the framework, map threats to existing controls after the fact, and finally declare the result “risk-based” and “threat-informed.”

This isn’t surprising. Decision science gives us a clear explanation:

Frameworks are cognitive shortcuts. They allow us to transform an inherently overwhelming question such as “how do we secure our whole organization against a range of dynamic adversaries?” into a manageable one such as “which requirements do we need to satisfy?” [1]

Where there is genuine complexity and uncertainty, heuristics like this one are understandable and even rational.

The problem isn’t the frameworks or standards themselves, though. ISO 27001, NIST CSF, CIS Controls, and similar frameworks all represent reasonable baselines developed by communities of experienced practitioners. But they are context-agnostic by design, built to be applicable across hospitals, tech startups, financial institutions, and retail chains. This universality is both their value and, when misapplied, their fundamental flaw.

This becomes clear if we consider a large retail bank and a large general insurer. Both operate in financial services. Both might pursue ISO 27001 certification with similar security architectures. But they face meaningfully different adversaries with different motivations, capabilities, and tactics, techniques, and procedures (TTPs). A criminal group interested in payment card data and a sophisticated threat actor pursuing long-term access to policy data require quite different defensive approaches. A framework alone does not distinguish between them; that requires rigorous threat modeling conducted before control design is finalized.

When organizations treat these frameworks as design specifications rather than as a baseline coverage checklist, the result is controls that are present but not tailored to the threats relevant to their operating environment. And because the compliance requirements regarded as best practice are satisfied, neither the organizations nor their regulators notice the problem until someone tries to get through the proverbial door.

The Feedback Failure

The framework-first approach might be tolerable if organizations had strong feedback loops that revealed when controls weren’t working. Unfortunately, many don’t.

But this isn’t just about an absence of feedback. We also have to consider more insidious cases, where feedback exists but gets misinterpreted, or where it is deliberately tuned to confirm existing assumptions.

Security teams understandably celebrate what look like wins: phishing emails caught by filters, vulnerabilities patched before exploitation, login attempts blocked. The problem here is that metrics such as these mostly reflect resilience against unsophisticated, opportunistic attacks. They provide little to no insight about performance against a capable, motivated adversary with time and patience.

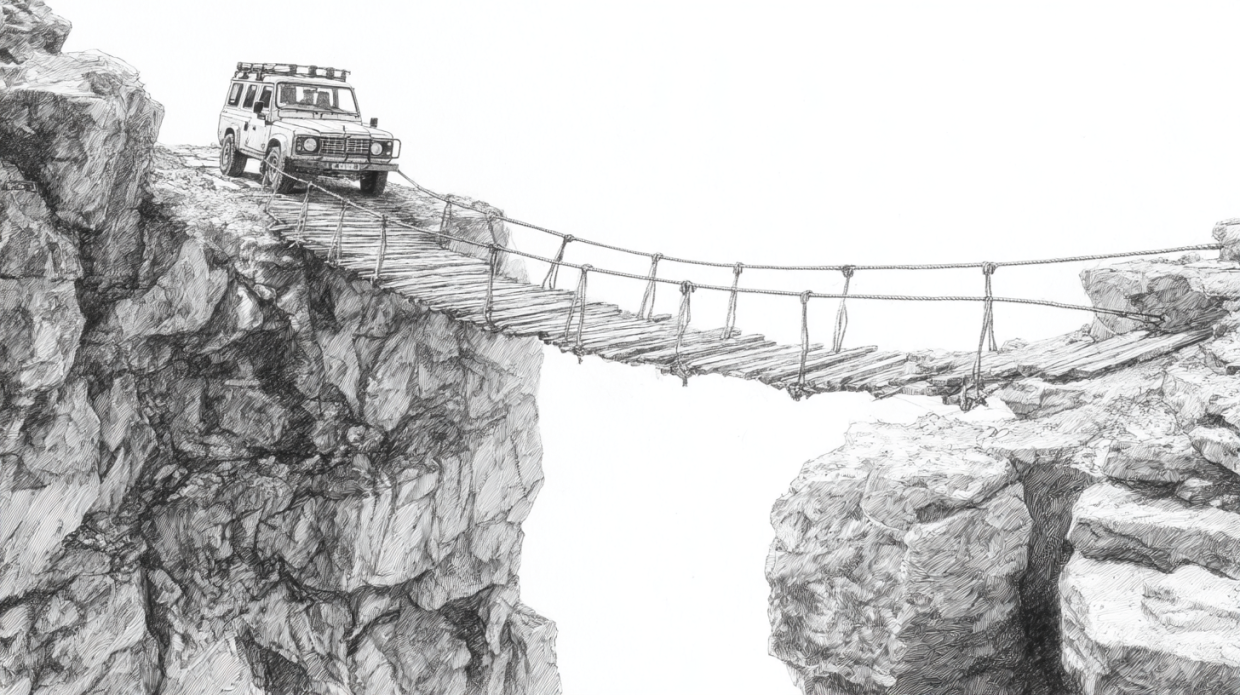

To draw a parallel, a bridge might handle daily traffic without visible strain while harboring structural weaknesses that only appear under earthquake conditions. Feedback from normal load does not tell you whether the bridge will hold under extreme load.

A reasonable objection here is that organizations cannot afford to wait for a sophisticated attack to learn whether their controls work. That’s fair, and it is exactly why we have ways to simulate adversary behavior: red team exercises, purple team engagements, and rigorous penetration testing with realistic scope and objectives. These approaches exist precisely to create the feedback that normal operations don’t provide.

But as I’ll point out below, these approaches are frequently structured in ways that prioritize organizational comfort over confrontation, limiting their ability to expose deeper weaknesses. The 2021 Illuminate Education breach is instructive here: a third-party vendor flagged high-risk vulnerabilities in 2020, threat detection tools were in place, and the feedback was available. None of it was acted on, and an attacker eventually accessed the company’s systems using the credentials of an employee who had left more than three years earlier.

Compounding the feedback problem is the way organizational incentives distort what gets reported. In environments where leadership wants to hear about progress, security teams respond rationally by reporting progress. They do this by emphasizing positive metrics, framing findings optimistically, and providing information that creates a sense of forward momentum. When boards and executives lack the domain knowledge to ask hard questions, this reporting style goes unchallenged.

This dynamic extends to the operational level, where decisions about configuration baselines, privilege models, logging verbosity, patch cadence, and monitoring tuning sit fully within the remit of IT and security teams without requiring board approval. In the absence of effective feedback, these decisions tend to get optimized for operational convenience rather than security effectiveness. Excessive permissions get granted upfront because they reduce help desk tickets. Broad policy exceptions get approved because enforcement creates friction. Audit findings get “risk accepted” indefinitely because remediation might break something.

The harsh reality is that many organizations are not optimized for the feedback they need. Rather, they are optimized for the feedback they can tolerate.

The result is predictable: security teams surface metrics that are convenient, measurable, and defensible, while the metrics that would truly test resilience remain out of view.

Reporting Theater

We see this feedback failure most clearly in security reporting.

Instead of conveying an accurate picture of risk, reports are shaped to maintain leadership confidence by narrowing what is communicated and curating how it is presented.

With reporting theater, the patterns tend to be familiar. Internal dashboards show metrics like “94% of critical systems patched within SLA,” “phishing click rate decreased 40% year-over-year,” or “zero critical assessment findings.” These may all be factually accurate. They’re also carefully selected to create narrative closure, providing the impression that security is being handled competently without requiring leadership to engage with uncomfortable specifics.

The follow-up questions that would reveal whether these metrics actually mean anything rarely get asked: What defines “critical systems” and are the SLAs appropriately tight? Is the decline in phishing clicks genuine behavior change or increasingly obvious simulations? Do zero critical findings reflect real risk reduction, or convenient scoping that keeps findings manageable?

This is a classic principal-agent problem. Leadership wants actual security, and security teams are supposed to provide it. However, in environments where leadership can’t directly evaluate security quality, it relies on reports. If reporting structures reward positive narratives, agents rationally optimize for positive narratives. The information asymmetry persists, and it extends outward: annual reports, regulatory submissions, and security questionnaires are all shaped in ways that systematically obscure actual exposure from every stakeholder who might care about it.

The Testing Paradox

One of the most revealing manifestations of this dynamic is in how security assessments get designed and executed.

Penetration tests, red team exercises, vulnerability scans, and compliance audits can provide genuine feedback. Some in the security community will push back here, but organizations regularly undermine these exercises by constraining how assessments are performed, who the reports can be shared with, and how seriously the results are taken.

These constraints almost never look like sabotage and are more likely to show up as routine, reasonable decisions:

- Legacy systems get excluded from testing because “we know they’re problematic and we’re planning to replace them.” Yet replacements rarely happen on schedule, and those same systems remain in production.

- Assessments target specific applications while excluding underlying infrastructure, integrations, and third-party connections, even though these are critical components of the actual attack surface.

- “Critical findings” are defined in ways that align with what is already budgeted rather than what would actually matter to an adversary.

- Remediation happens just well enough to show progress, without asking whether the fixes actually improved resilience against realistic attack paths.

The result is a closed loop: weak assessments produce manageable findings, which get partially remediated, which justify claims that security is “mature and improving,” which reduces urgency for deeper investment, which perpetuates reliance on fragile controls, which necessitates keeping assessments safely scoped.

This is the testing paradox: rigorous adversarial simulation could create the feedback loops that reveal control fragility, but organizations avoid genuine rigor precisely because they fear (consciously or not) what they might discover.

The feedback mechanism exists but is deliberately constrained to avoid exposing an uncomfortable reality. To return to the bridge analogy: it never gets load-tested.

Why It Persists

It’s fair to ask why the problem persists. The answer lies in how individual rationality can produce collective dysfunction.

The reality is that security professionals often know when, and to what extent, their organization’s controls are largely theater. They may want to change that, but doing so carries professional risk. A CISO who insists on transparency about control failures may be replaced by someone more willing to manage perceptions. The analyst or engineer who documents fragility honestly and without reservation gets labeled “not a team player.” The board member who asks uncomfortable questions is accused of not being technical enough to understand, or is told they’re micromanaging.

This is the textbook definition of a collective action problem. All of us might be better off in a world with honest security reporting and rigorous assessment, but any individual who moves first toward that world bears the cost disproportionately. So the equilibrium persists, not because we deliberately designed it this way, but because the incentive structures, information flows, and feedback mechanisms we work with all reinforce it.

We see a similar dynamic in vendor selection. Where buyers can’t evaluate security quality directly, they use certifications and attestations as proxies. A vendor with genuine investment in threat modeling and resilience engineering but without the right attestation badges may lose deals to a vendor with demonstrably weaker security but impressive attestations. The market selects for legibility over effectiveness, and this is economically rational behavior by buyers operating under information constraints. Unfortunately, at scale it produces systematically poor security outcomes.

The challenge is further compounded by limited leadership visibility. Regulators, executives, and board members often lack the background to evaluate security claims directly. They ask about compliance status, certification achievement, and high-level metrics, and security teams respond rationally by providing exactly that. Recipients of regular reports showing compliance and positive indicators conclude that appropriate oversight is being exercised, while remaining genuinely blind to systemic fragility. None of this stems from willful negligence; it stems from honest mistakes enabled by information asymmetry.

What Would Actually Change This

There’s no magic bullet or single lever to address this. What we have is a systems problem, and addressing systems problems requires coordinated pressure from multiple directions.

At the board and governance level, the most immediately actionable change is in the questions that get asked. We need a shift from “are we doing security things?” to “would our security things actually work when tested?”

Instead of “what’s our patch compliance rate?”, ask “show me the critical assets that aren’t patched and explain what would happen if an adversary compromised one.” Instead of “did we pass our audit?”, ask “what did our most recent adversarial testing reveal about control failures?”

This doesn’t require boards to become technical experts, but it does require them to insist on answers that describe performance under stress rather than the mere presence of controls. It also means requesting that red team and penetration test findings reach the board directly rather than filtered through management summaries.

At the regulatory level, current frameworks largely codify process compliance: do you have a plan, do you conduct training, do you patch within defined timeframes. This reinforces the framework-first approach because process compliance becomes the goal.

More useful regulatory approaches would require adversarial testing with results reported to regulators, not just conducted internally, along with disclosure of detection and containment metrics from simulated incidents. The EU’s Digital Operational Resilience Act (DORA) already moves in this direction with its digital operational resilience testing requirements, which include threat-led penetration testing for certain financial entities. Other jurisdictions, particularly those where regulatory pressure has significant influence over institutional behavior, have an opportunity to follow and potentially leapfrog the approaches of larger markets.

At the professional level, the most structural change would be giving security leaders the institutional independence to act honestly against organizational pressure. When CISOs report through CIO structures, there’s an inherent conflict whenever security requirements create friction with service delivery goals. Reporting lines to the board or CEO, with independence protections similar to those of the internal audit function, would go a long way toward removing that conflict.

The need for this is more pressing than we as a profession like to admit. Splunk’s 2025 CISO Report found that 21% of CISOs had been pressured not to report a compliance issue. That points to a structural problem, and unless we provide structural protection, the individual cost of honesty will remain too high for the collective norm to shift.

While none of these interventions alone will break the equilibrium, together they allow us to attack it from multiple directions: governance pressure makes boards uncomfortable with comfortable narratives; regulatory requirements shift what “compliance” means toward demonstrated resilience; professional protections reduce the personal cost of honesty.

The goal here is to gradually make security theater more expensive and genuine security more legible.

Where to Start

The systemic changes above will take time, but individual choices are available today.

For security leaders, the immediate question is whether you can introduce one uncomfortable truth into the next board or executive report. I’m not advocating panic, just the inclusion of at least one data point that reveals fragility the current reporting may gloss over.

For example: “We have 98% patch compliance overall, but 27 critical assets remain unpatched due to operational constraints.” You can then explain what those assets can access and what a compromise would mean. That’s the beginning of training leadership to expect and value uncomfortable honesty over comfortable theater.
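To make the arithmetic behind that kind of statement concrete, here is a minimal Python sketch, assuming a hypothetical asset inventory in which each record carries only a name, a criticality flag, and a patch status. The `Asset` type, the `compliance_rate` and `patch_report` helpers, and the inventory counts (1,350 assets, 120 of them critical) are illustrative assumptions chosen to reproduce the 98% / 27-asset example, not data from any real environment or tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    """Hypothetical inventory record; field names are illustrative only."""
    name: str
    critical: bool
    patched: bool


def compliance_rate(assets: list[Asset]) -> float:
    """Fraction of the given assets that are patched (0.0 for an empty list)."""
    if not assets:
        return 0.0
    return sum(a.patched for a in assets) / len(assets)


def patch_report(assets: list[Asset]) -> dict:
    """Report the headline rate alongside the critical-tier rate and the named gaps."""
    critical = [a for a in assets if a.critical]
    return {
        "overall_rate": compliance_rate(assets),
        "critical_rate": compliance_rate(critical),
        "unpatched_critical": [a.name for a in critical if not a.patched],
    }


# Made-up inventory: 1,230 non-critical assets, all patched,
# plus 120 critical assets, of which the first 27 are unpatched.
inventory = [Asset(f"std-{i}", critical=False, patched=True) for i in range(1230)] + [
    Asset(f"crit-{i}", critical=True, patched=i >= 27) for i in range(120)
]

report = patch_report(inventory)
print(f"Overall patch compliance: {report['overall_rate']:.1%}")  # 98.0%
print(f"Critical-tier compliance: {report['critical_rate']:.1%}")  # 77.5%
print(f"Unpatched critical assets: {len(report['unpatched_critical'])}")  # 27
```

The point of the sketch is that the same inventory supports two very different headlines: the overall rate rounds to 98%, while the critical tier on its own sits at 77.5%. Reporting both, together with the named list of unpatched critical assets, puts the uncomfortable data point right next to the comfortable one.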

For members of the board or risk committee, the question is whether you’re asking about control presence or control performance. The distinction matters more than it might appear.

For example, “do we have an incident response plan?” and “would our incident response plan work against a realistic attack scenario?” are very different questions. Insisting on answers to the second type of question, and requesting that assessment findings reach you unfiltered, can change the reporting the organization produces.

For risk and security practitioners, the most useful thing we can do is treat security claims as hypotheses that require evidence rather than as conclusions. “We have MFA deployed” is not the same as “our MFA implementation resists common bypass techniques, and we’ve verified this by testing.” Accepting the first without demanding the evidence the second requires is precisely how the gap stays hidden.
